Next-Generation Digital Stethoscopes: AI-Driven Remote Monitoring and Predictive Care

by Emmanuel Andres*, Noel Lorenzo-Villalba

Department of Internal Medicine, Service de Médecine Interne. Hôpitaux Universitaires de Strasbourg, 67000 Strasbourg, France

*Corresponding Author: Emmanuel Andres, Department of Internal Medicine, Service de Médecine Interne. Hôpitaux Universitaires de Strasbourg, 67000 Strasbourg, France

Received Date: 14 October 2025

Accepted Date: 18 October 2025

Published Date: 21 October 2025

Citation: Andres E, Lorenzo-Villalba N. (2025). Next-Generation Digital Stethoscopes: AI-Driven Remote Monitoring and Predictive Care. Ann Case Report. 10: 2431. https://doi.org/10.29011/2574-7754.102431

Abstract

Digital stethoscopes, enhanced with artificial intelligence (AI) and machine learning (ML), represent a transformative advancement in telemedicine by enabling automated, high-precision auscultation. Equipped with high-fidelity acoustic sensors and real-time signal processing, these devices use AI to detect, classify, and interpret heart and lung sounds with diagnostic reliability comparable to that of expert clinicians. Their incorporation into telehealth platforms facilitates remote monitoring, early detection of cardiopulmonary abnormalities, and data-driven management of chronic diseases, particularly in resource-limited or decentralized care settings. Clinical evidence supports the equivalence of AI-assisted digital auscultation to traditional in-person evaluation, with the added benefits of continuous monitoring, predictive analytics, and personalized acoustic profiling. Challenges to widespread adoption remain, including cost, clinician training, regulatory oversight, and data privacy, yet AI-powered digital stethoscopes are emerging as essential tools in connected healthcare, advancing equitable, responsive, and precision-driven patient care.

Keywords: Digital Stethoscope; Artificial Intelligence (AI); Machine Learning (ML); Cardio-Pulmonary Diagnosis; Telemedicine; Automated Auscultation; Remote Monitoring; Connected Healthcare; Predictive Analytics; Precision Medicine.

Introduction

Auscultation remains a cornerstone of clinical diagnosis, enabling assessment of cardiac, pulmonary, and gastrointestinal function. Traditional acoustic stethoscopes, in use since Laennec’s invention in 1816, are inherently subjective and reliant on clinician expertise [1]. Digital and electronic stethoscopes amplify and digitize physiological sounds, allowing real-time analysis, storage, and sharing. They integrate microphones, analog-to-digital converters, embedded processors, and, often, wireless connectivity. Advanced features include noise reduction, intelligent filtering, and, crucially, machine learning–based sound classification and pattern recognition [2-4]. Physiological signals are low-amplitude, broad-spectrum, and non-stationary, and they undergo attenuation and distortion as they cross tissues. Optimizing the mechanical and electronic components (diaphragms, tubing, microphones, and amplification circuits) is essential to preserve signal fidelity across the 20-2000 Hz range under constraints of size, power, and latency [4,5].

Artificial intelligence (AI) now plays a central role, enabling automated detection of murmurs, arrhythmias, respiratory abnormalities, and other subtle pathologies. AI-driven stethoscopes support telemedicine, clinical decision-making, data archiving, and medical education, with performance approaching that of expert clinicians. Validation, usability, regulation, and privacy remain challenges, but AI integration represents a paradigm shift in auscultation [5,6].

This review emphasizes the physical, mechanical, and computational foundations of modern stethoscopes, highlighting how AI transforms auscultation by bridging biomedical engineering, signal processing, and clinical application, and guiding innovation in digital health.

Materials and Methods

This narrative review was conducted to evaluate the efficacy, reliability, and emerging role of digital stethoscopes enhanced by AI, compared with traditional acoustic devices. A systematic and comprehensive search was performed across PubMed, Embase, and the Cochrane Library, covering publications up to March 2025. Key search terms included “digital stethoscope,” “electronic stethoscope,” “digital auscultation,” “AI-assisted auscultation,” and “machine learning stethoscope.”

To ensure inclusion of high-quality evidence, only publications in English or French were considered, and single case reports and unpublished works were excluded. Reference lists of selected studies were systematically screened to identify additional relevant publications.

This methodology enabled a rigorous synthesis of current knowledge regarding the integration of AI algorithms in digital auscultation, including their potential to detect murmurs, arrhythmias, and respiratory abnormalities. The review highlights the technological features, clinical advantages, limitations, and implementation challenges of AI-driven digital stethoscopes, providing healthcare professionals with evidence-based guidance for adoption in routine diagnostic practice.

Biomedical Acoustics, Digital Stethoscope Design, and AI-Based Signal Analysis

Physiological sounds originate from mechanical and fluid dynamic processes within organ systems. Cardiac signals, such as S1 and S2, are produced by valve closures, while S3, S4, and murmurs reflect abnormal ventricular compliance or turbulent flow across pathological valves [1,6]. Pulmonary sounds arise from airflow through the bronchial tree and alveoli, with normal patterns including vesicular and bronchial breathing, and abnormal sounds such as crackles, wheezes, rhonchi, or stridor indicating airway pathology [7,8]. Vascular sounds, including bruits, result from turbulent flow in stenotic arteries [1]. These signals are low in amplitude (mPa–Pa) and broadband, spanning 20–150 Hz for cardiac sounds, 100–2000 Hz for pulmonary sounds, and 50–800 Hz for vascular sounds [1,6-8]. Their non-stationary nature necessitates time-frequency analysis, including STFT and wavelet transforms, for adaptive filtering and feature extraction [6].
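The role of time-frequency analysis for non-stationary sounds can be illustrated with a minimal short-time Fourier transform. The sketch below is not taken from any of the cited devices: the synthetic two-tone signal, the 4 kHz sampling rate, the 256-sample Hann window, and the function name `stft_peak_frequencies` are all assumptions chosen for illustration, and the transform is written as a plain discrete Fourier sum for readability rather than speed.

```python
import cmath
import math


def stft_peak_frequencies(signal, fs, frame_len=256, hop=128):
    """Return the dominant frequency (Hz) of each Hann-windowed frame."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
              for n in range(frame_len)]
    peaks = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = [signal[start + n] * window[n] for n in range(frame_len)]
        best_bin, best_mag = 0, 0.0
        # Scan positive-frequency bins only (bin 0 is the DC component)
        for k in range(1, frame_len // 2):
            acc = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                      for n in range(frame_len))
            if abs(acc) > best_mag:
                best_bin, best_mag = k, abs(acc)
        peaks.append(best_bin * fs / frame_len)
    return peaks


# Synthetic, illustrative signal: a 100 Hz tone (cardiac range) followed
# by a 500 Hz tone (pulmonary range). These are not recorded data.
fs = 4000
n_half = 1024
signal = ([math.sin(2 * math.pi * 100 * n / fs) for n in range(n_half)]
          + [math.sin(2 * math.pi * 500 * n / fs) for n in range(n_half)])
peaks = stft_peak_frequencies(signal, fs)
```

A single Fourier transform of the whole recording would show both tones but not when each occurs; the frame-by-frame peaks recover the change over time, at a frequency resolution of `fs / frame_len`.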

Sound propagation is influenced by layered tissues (skin, fat, muscle, bone, and pleura), which cause frequency-dependent attenuation, commonly modeled as $\alpha(f) = \alpha_0 \cdot f^{n}$, where n is tissue-specific [1,5]. Low-frequency components transmit more efficiently than high-frequency murmurs, and optimal acoustic coupling at the skin–sensor interface, via diaphragms or gels, is essential to maximize signal capture [1]. Digital stethoscope design benefits from physical modeling and numerical simulations, including FEM and BEM, to predict wave interactions, optimize sensor placement, and evaluate diaphragm vibrations for resonance and bandwidth, supporting personalized calibration and explaining inter-anatomical variability in auscultation [2,5,9].
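The power-law attenuation model, in which attenuation equals a coefficient α0 multiplied by frequency raised to a tissue-specific exponent n, can be explored numerically. In the sketch below the function names and the example exponent are hypothetical; the review does not report values of α0 or n for any tissue, so the numbers serve only to show how the exponent shapes the penalty on higher frequencies.

```python
def attenuation(f_hz, alpha0, n):
    """Power-law attenuation alpha(f) = alpha0 * f**n (units follow alpha0)."""
    return alpha0 * f_hz ** n


def attenuation_ratio(f_high, f_low, n):
    """Ratio alpha(f_high) / alpha(f_low); alpha0 cancels out."""
    return (f_high / f_low) ** n


# Hypothetical exponent, for illustration only (not a measured tissue value)
n_example = 1.0
ratio = attenuation_ratio(1000.0, 100.0, n_example)
```

Because α0 cancels in the ratio, the relative loss between two frequencies depends only on n. With n = 1, a tenfold increase in frequency gives a tenfold increase in attenuation, which is consistent with low-frequency components transmitting more efficiently.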

The chest piece serves as the primary mechanical-acoustic interface. Its diaphragm, typically made from PET, silicone, or polyurethane, determines resonance and bandwidth, with thinner membranes favoring low-frequency detection and stiffer membranes enhancing high-frequency sensitivity [9,10]. Dual membranes or variable-pressure designs further expand frequency coverage [10,11]. Mechanical coupling and isolation from hand contact or ambient noise are critical for preserving low-amplitude signals [9,11]. Sensors convert mechanical vibrations into electrical signals. Modern devices predominantly use MEMS microphones for high SNR (>65 dB), flat frequency response, low power consumption, and durability, while piezoelectric sensors offer robustness and EMI resistance but limited high-frequency sensitivity; hybrid MEMS–piezoelectric systems are under development to optimize performance [10-12].

The analog front-end amplifies and filters signals prior to digitization, employing LNAs with gains of 20-60 dB, anti-aliasing filters tuned to 20-150 Hz (cardiac) or up to 2000 Hz (pulmonary), and impedance matching to prevent signal loss [9-11]. Digitization is performed with ADCs, typically 16-bit and sampling at 4–8 kHz, ensuring sufficient dynamic range and temporal resolution to capture murmurs and transient events [9-11]. Post-digitization, embedded microcontrollers, DSPs, or FPGAs perform real-time processing, including noise reduction, segmentation, envelope detection, and spectral analysis via FFT or wavelet decomposition [9,10]. Latency is maintained below 50 ms for real-time feedback, with data stored locally or streamed via wireless protocols for telemedicine applications, and energy-efficient components extending portable device battery life [9-11].
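The front-end figures quoted above can be related through standard arithmetic. The helper names below are hypothetical, and the quantization formula assumes an ideal converter driven by a full-scale sine wave, so real devices would be expected to fall short of it.

```python
def gain_db_to_linear(gain_db):
    """Convert a voltage gain in dB to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


def ideal_adc_snr_db(bits):
    """Ideal quantization SNR for a full-scale sine: 6.02 * N + 1.76 dB."""
    return 6.02 * bits + 1.76


def nyquist_hz(fs):
    """Highest frequency representable without aliasing at sampling rate fs."""
    return fs / 2


def samples_within_latency(fs, latency_ms):
    """Number of samples that fit inside a latency budget given in ms."""
    return fs * latency_ms // 1000


lna_range = (gain_db_to_linear(20), gain_db_to_linear(60))  # 20-60 dB LNA gain
snr_16_bit = ideal_adc_snr_db(16)
nyquist_at_4k = nyquist_hz(4000)
buffer_at_8k = samples_within_latency(8000, 50)
```

Under these assumptions, sampling at 4 kHz places the Nyquist limit at 2000 Hz, the upper edge of the pulmonary band, so the anti-aliasing cutoff would in practice sit somewhat below that edge or the sampling rate would be raised toward 8 kHz. A 50 ms latency budget at 8 kHz corresponds to a processing buffer of at most 400 samples.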

Robust signal processing is essential in noisy clinical environments. Band-pass filters and advanced methods, including adaptive LMS/RLS filtering and wavelet denoising, preserve physiological transients while reducing artifacts [10,11,13]. Segmentation identifies individual heartbeats or respiratory cycles, supporting diagnosis and classification. Cardiac segmentation uses hidden semi-Markov models (HSMM) trained on envelope energy, spectral flux, and zero-crossing rate, while respiratory cycles are detected via energy thresholds, zero-crossing, or Hilbert transform envelopes [6,13]. Time-domain metrics (RMS amplitude, peak intervals, heart rate variability) complement frequency-domain and time-frequency features (PSD, STFT, CWT/DWT, MFCCs) to enhance discrimination of murmurs, clicks, and crackles [9-11].
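Adaptive LMS filtering can be sketched as a two-input noise canceller: a reference channel picks up ambient noise, and the filter learns how that noise appears in the chest-piece signal so it can be subtracted. Everything below is an illustrative assumption rather than a description of a cited device, including the synthetic 50 Hz "heart-like" tone, the two-coefficient noise path, the four-tap filter, and the step size.

```python
import math
import random


def lms_cancel(primary, reference, n_taps=4, mu=0.01):
    """Subtract an adaptively filtered copy of `reference` from `primary`."""
    weights = [0.0] * n_taps
    cleaned = []
    for i in range(len(primary)):
        # Most recent reference samples, newest first (zero-padded at start)
        x = [reference[i - k] if i - k >= 0 else 0.0 for k in range(n_taps)]
        estimate = sum(w * xi for w, xi in zip(weights, x))
        error = primary[i] - estimate  # the error is also the cleaned output
        weights = [w + mu * error * xi for w, xi in zip(weights, x)]
        cleaned.append(error)
    return cleaned, weights


def mse(a, b):
    """Mean squared difference between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


fs = 4000
n_samples = 4000
rng = random.Random(0)
heart_like = [0.5 * math.sin(2 * math.pi * 50 * n / fs) for n in range(n_samples)]
ambient = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
# Hypothetical noise path: ambient noise arrives scaled, plus a delayed copy
primary = [heart_like[n] + 0.8 * ambient[n] + (0.3 * ambient[n - 1] if n else 0.0)
           for n in range(n_samples)]

cleaned, weights = lms_cancel(primary, ambient)
half = n_samples // 2  # evaluate after the filter has had time to converge
error_before = mse(primary[half:], heart_like[half:])
error_after = mse(cleaned[half:], heart_like[half:])
```

The filter needs no prior knowledge of the noise path; its weights drift toward the path coefficients because the physiological component is uncorrelated with the reference. A smaller `mu` lowers the residual error at the cost of slower convergence.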

Nonlinear descriptors including Shannon and spectral entropy, fractal dimensions, Lyapunov exponents, and Hjorth parameters quantify signal complexity and irregularity, offering sensitivity to pathological changes in atrial fibrillation, valvular stenosis, and chronic obstructive pulmonary disease (COPD) [6,9,11,13]. Feature selection and dimensionality reduction, via PCA, LDA, or RFE, optimize model efficiency while preserving discriminative power [9-11]. Extracted features are input to machine learning classifiers, including SVMs, random forests, gradient-boosted trees, and ANNs, particularly CNNs applied to spectrograms or MFCC matrices for hierarchical feature learning [9-11]. Model performance is assessed using cross-validation and ROC analysis, measuring accuracy, sensitivity, specificity, and AUC [6,9,11]. Several commercial and academic digital stethoscopes now provide real-time AI-assisted feedback, alerting clinicians to abnormal cardiac or pulmonary sounds with validated classification scores [6,14].
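Among the complexity descriptors listed above, the Hjorth parameters are simple to compute from variances of the signal and its successive differences. The sketch below uses the usual discrete definitions (activity, mobility, complexity); the test signals are synthetic and the function name is an assumption, so the values illustrate the behavior of the descriptor rather than any clinical threshold.

```python
import math
import random
import statistics


def hjorth_parameters(x):
    """Return (activity, mobility, complexity) of a sampled signal."""
    dx = [b - a for a, b in zip(x, x[1:])]      # first difference
    ddx = [b - a for a, b in zip(dx, dx[1:])]   # second difference
    var_x = statistics.pvariance(x)
    var_dx = statistics.pvariance(dx)
    var_ddx = statistics.pvariance(ddx)
    mobility = math.sqrt(var_dx / var_x)
    complexity = math.sqrt(var_ddx / var_dx) / mobility
    return var_x, mobility, complexity


fs = 4000
# Synthetic signals for illustration: a pure tone and white noise
tone = [math.sin(2 * math.pi * 100 * n / fs) for n in range(fs)]
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(fs)]

_, _, tone_complexity = hjorth_parameters(tone)
_, _, noise_complexity = hjorth_parameters(noise)
```

A pure sinusoid has a complexity close to 1, and irregular signals score higher, which is the property that makes the descriptor a candidate feature for separating regular from irregular sounds.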

This integrated framework, linking biomedical acoustics, mechanical design, digital signal processing, and AI, supports automated, reliable, and clinically actionable auscultation, bridging engineering, signal analysis, and medical practice.

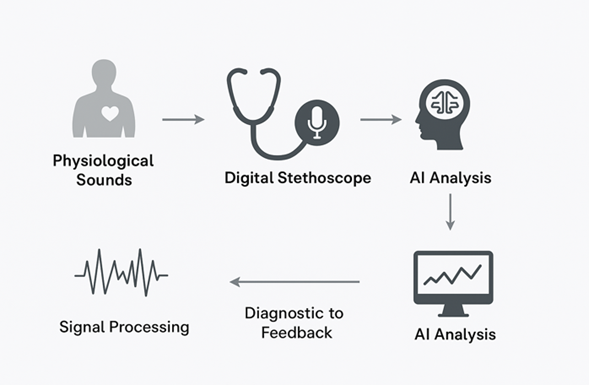

Figure 1: Workflow of AI-Enhanced Digital Auscultation. Schematic representation of the AI-enabled digital stethoscope workflow. Physiological sounds are captured by a digital stethoscope, digitized, and processed through AI algorithms for signal analysis and pattern recognition. The system provides real-time diagnostic feedback to clinicians, enhancing the accuracy and reliability of auscultation.

AI and ML in Digital Stethoscopes: Technological Advances and Clinical Evidence

1. Technological Advances in AI-Powered Digital Stethoscopes

Huang et al. (2023) conducted a comprehensive review of deep learning algorithms applied to lung sound analysis, highlighting the potential of AI to transform traditional auscultation practices [14]. Their study emphasized the use of convolutional neural networks (CNNs) to process two-dimensional spectrograms of lung sounds, facilitating the automatic detection of respiratory abnormalities such as wheezes, crackles, and rhonchi. Moreover, the authors provided an open-source framework to standardize algorithmic workflows, promoting reproducibility and enabling future development in this field.

In a practical implementation, Zhang et al. (2023) developed a low-cost, AI-powered stethoscope capable of diagnosing both cardiac and respiratory conditions [15]. Utilizing a lightweight model deployed on a Raspberry Pi Zero 2W, the system achieved remarkable performance metrics: 99.94% accuracy, 99.84% precision, 99.89% specificity, 99.66% sensitivity, and a 99.72% F1 score. These results demonstrate the feasibility of deploying high-performance diagnostic tools in resource-limited settings.
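The metrics reported for such classifiers all derive from the four cells of a binary confusion matrix. The sketch below shows the standard definitions; the counts are hypothetical and are not taken from Zhang et al. or any other cited study.

```python
def classification_metrics(tp, fp, tn, fn):
    """Standard binary metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)   # recall: diseased cases correctly flagged
    specificity = tn / (tn + fp)   # healthy cases correctly cleared
    precision = tp / (tp + fp)     # flagged cases that are truly diseased
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "precision": precision,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
    }


# Hypothetical counts for illustration only
metrics = classification_metrics(tp=90, fp=20, tn=80, fn=10)
```

Accuracy depends on the balance between diseased and healthy recordings in the test set, which is why sensitivity and specificity are usually reported alongside it.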

Lee et al. (2022) introduced a soft, wearable stethoscope designed for continuous, real-time auscultation [16]. The flexible device reduces motion artifacts and friction noise, enhancing the quality of recorded signals. Embedded machine learning algorithms enabled the classification of lung sounds and detection of abnormalities with 95% accuracy, highlighting potential applications in home-based monitoring and sleep studies.

Kim et al. (2023) reviewed the evolution of stethoscope technology, emphasizing the integration of ML algorithms into wearable and digital devices for automated detection of heart sounds [17]. These AI-powered stethoscopes facilitate identification of murmurs, abnormal rhythms, and other pathological cardiac signals while providing real-time feedback and enabling remote monitoring.

Jiang et al. (2024) leveraged deep learning to process heart sounds and detect valvular heart disease automatically [18]. The model achieved high diagnostic performance, with sensitivity and specificity exceeding 90%, demonstrating accuracy comparable to expert cardiologists and highlighting the utility of AI-assisted auscultation in standardizing cardiac assessments.

2. Evidence from Recent Clinical Studies

Accurate detection and classification of cardiopulmonary abnormalities in pediatric, neonatal, and adult populations remain a central challenge in clinical practice. AI-enhanced digital stethoscopes have demonstrated substantial improvements in this domain. In pediatric cardiology, Zhou et al. developed a deep learning algorithm for multi-class classification of heart sounds, achieving AUC values of 0.92 for normal sounds, 0.83 for innocent murmurs, and 0.88 for pathological murmurs, providing objective, reproducible, and reliable murmur classification [19]. Similarly, digital stethoscopes have improved early detection of abnormal pediatric breath sounds. Kevat et al. reported higher sensitivity for wheeze detection than with traditional auscultation, along with 100% concordance for crackle identification [20], while Zhou et al. demonstrated enhanced recognition of wheezes, crackles, and stridor through objective analysis of sound features [21]. The PERCH study further demonstrated the utility of digital stethoscopes in low-resource settings for pediatric pneumonia diagnosis, reliably capturing crackles and wheezes in children aged 1-59 months, highlighting their potential as cost-effective, non-invasive diagnostic tools [22]. In neonates, Grooby et al. applied a random undersampling boosting (RUSBoost) classifier to digital stethoscope recordings, achieving 85% specificity, 66.7% sensitivity, and 81.8% overall accuracy for early detection of respiratory distress, underscoring the potential for timely clinical intervention [23].

Beyond pediatric and neonatal applications, AI-powered digital stethoscopes have advanced the detection of cardiac murmurs, valvular heart disease, and asymptomatic cardiovascular dysfunction in adults and obstetric populations. Chorba et al. trained a deep learning model on over 34 hours of heart sound recordings, achieving 76.3% sensitivity and 91.4% specificity for murmur detection, with improved sensitivity for moderate-to-severe aortic stenosis (93.2%) and mitral regurgitation (66.2%) [24]. In obstetric populations, AI-guided screening increased detection of peripartum left ventricular systolic dysfunction from 2.0% to 4.1% in over 1,200 women [25], demonstrating enhanced cardiovascular monitoring in low-resource settings. In adults, Guo et al. validated a CNN model combining digital stethoscope and single-lead ECG data, achieving an AUC of 0.85 with 77.5% sensitivity and 78.3% specificity for detecting reduced ejection fraction (≤40%) [25]. Pulmonary applications have also benefited from AI integration: Glangetas et al. [26] and Siebert et al. [27] employed CNN and LSTM models to analyze lung sounds, achieving AUC values greater than 0.80 for interstitial lung diseases (ILD) and COPD detection, while facilitating early diagnosis and prognosis of COVID-19 in decentralized care environments. Collectively, these studies demonstrate that AI-assisted digital auscultation provides a scalable, objective, and clinically actionable approach to cardiopulmonary evaluation across diverse patient populations.
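Several of the results above are reported as AUC values. As a reminder of what that number measures, the sketch below computes AUC from its rank-based definition: the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case, with ties counted as one half. The labels and scores are hypothetical and the function name is an assumption.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly."""
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical model scores for four recordings (1 = abnormal, 0 = normal)
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.5, 0.1]
auc = roc_auc(labels, scores)
```

Unlike sensitivity and specificity, AUC does not depend on a particular decision threshold, so an operating point still has to be chosen before a model can be used for screening.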

Tables 1 and 2 summarize the significant advances achieved through artificial intelligence (AI) and machine learning (ML) in human auscultation and digital stethoscope technology. Table 1 focuses on technological developments, highlighting AI and ML algorithms, device types, and performance metrics for cardiac and pulmonary sound analysis. Table 2 presents evidence from clinical studies, including pediatric, neonatal, obstetric, and adult populations, and illustrates how AI-enhanced digital stethoscopes improve diagnostic accuracy, sensitivity, and specificity compared to traditional auscultation.

| Study | Application | Algorithm / Model | Device Type | Key Performance Metrics |
|---|---|---|---|---|
| Huang et al., 2023 | Lung sound classification | CNN on 2D spectrograms | Digital stethoscope | Automatic detection of wheezes, crackles, rhonchi; framework for reproducibility |
| Zhang et al., 2023 | Cardiac & respiratory disease detection | Lightweight AI model (Raspberry Pi Zero 2W) | Low-cost digital stethoscope | Accuracy: 99.94%, Precision: 99.84%, Sensitivity: 99.66%, Specificity: 99.89%, F1: 99.72% |
| Lee et al., 2022 | Continuous real-time auscultation | Embedded ML algorithms | Soft wearable stethoscope | Accuracy: 95% |
| Kim et al., 2023 | Automated heart sound analysis | ML algorithms for real-time murmur/rhythm detection | Wearable digital stethoscope | Real-time feedback and remote monitoring |
| Jiang et al., 2024 | Valvular heart disease detection | Deep learning on heart sounds | Digital stethoscope | Sensitivity & specificity >90% |

Table 1: Technological Advances in AI- and ML-Powered Digital Stethoscopes.

| Study | Population | Clinical Application | Algorithm / Model | Key Performance Metrics |
|---|---|---|---|---|
| Zhou et al., 2024 | Pediatric patients | Heart murmur classification | Deep learning multi-class model | AUC: 0.92 (normal), 0.83 (innocent), 0.88 (pathologic) |
| Kevat et al., 2017 | Pediatric patients | Detection of abnormal breath sounds | Digital stethoscope signal analysis | 100% concordance for crackles, higher sensitivity for wheezes than clinician auscultation |
| PERCH Study, 2017 | Children 1–59 months | Pneumonia diagnosis | Digital stethoscope | Reliable detection of crackles and wheezes; higher prevalence in severe pneumonia |
| Grooby et al., 2022 | Term newborns | Neonatal respiratory distress detection | RUSBoost classifier | Accuracy: 81.8%, Sensitivity: 66.7%, Specificity: 85.0% |
| Chorba et al., 2021 | Adult patients | Cardiac murmurs & valvular disease | Deep learning | Murmur detection: Sensitivity 76.3%, Specificity 91.4%; Aortic stenosis: Sensitivity 93.2%, Specificity 86.0%; Mitral regurgitation: Sensitivity 66.2%, Specificity 94.6% |
| Adedinsewo et al., 2024 | Pregnant/postpartum women | Screening for LV systolic dysfunction | AI-guided digital auscultation | LVSD detection: 4.1% vs 2.0% in control |
| Guo et al., 2025 | Adults | Asymptomatic LV systolic dysfunction | CNN on digital stethoscope + ECG | AUC 0.85, Sensitivity 77.5%, Specificity 78.3% |
| Siebert et al., 2023 | Adult patients | ILD and COPD diagnosis | CNN + LSTM models on lung sounds + LUS | AUC > 0.80 |
| Glangetas et al., 2021 | COVID-19 patients | COVID-19 diagnosis and prognosis | Deep learning on lung sounds | Early detection and severity prediction; supports telemedicine |

Table 2: Clinical Evidence of AI-Enhanced Digital Stethoscopes.

Collectively, these tables demonstrate the transformative impact of AI and ML on auscultation, providing objective, reproducible, and scalable tools for the detection of heart and lung pathologies. The integration of AI-driven analysis into digital stethoscopes not only augments clinician capabilities but also enables early detection and intervention, particularly in resource-limited or remote settings.

Future Perspectives of AI in Digital Stethoscopes

The evolution of digital stethoscopes is increasingly driven by AI and ML, which enhance diagnostic accuracy, automate signal interpretation, and enable predictive, precision-driven healthcare. Flexible and wearable devices—including epidermal acoustic sensors and piezoelectric wearables—leverage AI to process high-fidelity heart and lung sounds in real time, reduce noise, and classify physiological signals, supporting continuous monitoring in pediatric, neonatal, and chronic care populations [28-30].

Multimodal data fusion, combining ECG, oxygen saturation, respiratory rate, and motion sensors, enables AI models to detect complex pathologies, such as early heart failure exacerbations, while personalized acoustic baselines allow longitudinal tracking of subtle changes in murmurs or wheezes, improving sensitivity and reducing false positives. These capabilities transform digital auscultation into a cornerstone of remote monitoring and telemedicine, facilitating connected healthcare by integrating patient data across distributed networks.
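One simple way to picture a personalized acoustic baseline is as a per-patient reference distribution for a chosen sound feature, against which each new recording is scored. The sketch below is an assumption for illustration and does not describe a method from the cited studies: the feature (an arbitrary band-energy value), the z-score rule, the threshold of 3, and both function names are hypothetical.

```python
import statistics


def baseline_deviation(baseline_values, new_value):
    """Z-score of a new measurement against the patient's own baseline."""
    mean = statistics.fmean(baseline_values)
    sd = statistics.stdev(baseline_values)
    return (new_value - mean) / sd


def flag_change(baseline_values, new_value, threshold=3.0):
    """Flag a recording whose feature departs strongly from baseline."""
    return abs(baseline_deviation(baseline_values, new_value)) > threshold


# Hypothetical band-energy values from one patient's earlier recordings
baseline = [10.0, 11.0, 9.0, 10.0, 10.0]
stable_visit = flag_change(baseline, 10.5)
changed_visit = flag_change(baseline, 14.0)
```

Scoring against the patient's own history rather than a population norm is what would allow a small change to stand out in one patient while the same absolute value remains unremarkable in another.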

Integration with augmented reality (AR), electronic health records (EHRs), and Internet of Medical Things (IoMT) standards (FHIR, HL7, DICOM-WAVE, IEEE 11073) supports real-time visualization, secure data streaming, and predictive analytics at both individual and population levels. This allows clinicians to implement data-driven decision-making, anticipate disease progression, and deliver precision medicine tailored to each patient’s physiological profile [28-33].

Although challenges such as device variability, noise sensitivity, and the limited interpretability of deep learning models remain, standardizing algorithms and datasets, along with interdisciplinary collaboration among clinicians, engineers, and data scientists, will be crucial to fully harness AI’s potential in auscultation. Ultimately, AI-powered digital stethoscopes promise to enable early detection, continuous remote monitoring, and seamless integration within connected healthcare ecosystems, transforming traditional auscultation into a proactive, predictive, and patient-centered diagnostic tool.

Conclusion

The Central Role of AI

AI is transforming the digital stethoscope from a purely acoustic instrument into a data-driven diagnostic platform. By combining high-fidelity acoustic sensors with machine learning algorithms, these devices enhance the detection and classification of subtle cardiac and pulmonary abnormalities, enable personalized monitoring, and support predictive, preventive, and precision healthcare. Integrated into telemedicine platforms, AI-powered stethoscopes facilitate remote monitoring and continuous patient assessment, expanding access to high-quality care in decentralized and resource-limited settings.

Clinical studies have demonstrated the utility of AI-assisted auscultation across pediatric, neonatal, obstetric, and adult populations, enabling early detection of heart murmurs, valvular disease, respiratory distress, and chronic pulmonary conditions. Future developments, including wearable and flexible sensors, multimodal biosensing, augmented reality overlays, and IoMT integration, promise to make auscultation continuous, automated, context-aware, and tightly integrated within connected healthcare ecosystems.

Despite these advances, challenges remain, including cost, clinician training, data privacy, and equitable access. Interdisciplinary collaboration among clinicians, engineers, and data scientists is essential to fully leverage AI’s potential in telemedicine, predictive analytics, and precision medicine, ultimately transforming traditional auscultation into a continuous, patient-centered diagnostic tool.

This work honors Mr. Raymond Gass, whose pioneering studies in Strasbourg laid the foundation for digital pulmonary auscultation, now amplified by AI and ML technologies.

Conflicts of Interest: No conflicts directly related to this work. Professor E. Andrès has conducted institutional research funded by Alcatel-Lucent and the French National Technology Agency.

Funding: French National Technology Agency.

References

- Tavel ME. (2018). Cardiac auscultation: a glorious past—and it does have a future. Circulation. 138: 117-119.

- Silverman B, Balk M. (2019). Digital stethoscope-improved auscultation at the bedside. Am J Cardiol. 23: 984-985.

- Andres E, Gass R, Brandt C. (2015). Etat de l’art sur les stéthoscopes électroniques en 2015. Médecine Thérapeutique. 21: 319-332.

- Choudry M, Stead TS, Mangal RK, Ganti L. (2022). The history and evolution of the stethoscope. Cureus. 14: e28171.

- Seah JJ, Zhao J, Wang DT, Lee HP. (2023). Review on the advancements of stethoscope types in chest auscultation. Diagnostics (Basel). 13: 1545.

- Reichert S, Gass R, Andres E. (2007). Analyse des sons auscultatoires pulmonaires. ITBM-RBM. 28: 169-180.

- Sovijarvi ARA, Vanderschoot J, Earis JE. (2000). Standardization of computerized respiratory sound analysis. Eur Respir Rev. 10: 647-649.

- Sovijarvi AR, Malmberg LP, Charbonneau G, Vanderschoot J, Dalmasso F, et al. (2000). Characteristics of breath sounds and adventitious respiratory sounds. Eur Respir Rev. 10: 591-596.

- Nowak LJ, Nowak KM. (2018). Sound differences between electronic and acoustic stethoscopes. Biomed Eng Online. 17: 104.

- Watrous RL, Grove DM, Bowen DL. (2002). Methods and results in characterizing electronic stethoscopes. Computers in Cardiology. 2002: 1-4.

- Landge K, Kidambi BR, Singal A, Basha A. (2018). Electronic stethoscopes: brief review of clinical utility, evidence, and future implications. J Pract Cardiovasc Sci. 4: 65.

- Ghazali FAM, Hasan N, Rehman T, Nafea M, Ali MSM, et al. (2020). MEMS actuators for biomedical applications: a review. J Micromechanics Microengineering. 30: 073001-073020.

- Koning C, Lock A. (2021). A systematic review and utilization study of digital stethoscopes for cardiopulmonary assessments. JMRI. 5: 1-11.

- Huang DM, Huang J, Qiao K, Zhong NS, Lu HZ, et al. (2023). Deep learning-based lung sound analysis for intelligent stethoscope. Mil Med Res. 10: 44.

- Zhang M, Li M, Guo L, Liu J. (2023). A low-cost AI-empowered stethoscope and a lightweight model for detecting cardiac and respiratory diseases from lung and heart auscultation sounds. Sensors (Basel). 23: 2591.

- Lee SH, Kim YS, Yeo MK, Mahmood M, Zavanelli N, et al. (2022). Fully portable continuous real-time auscultation with a soft wearable stethoscope designed for automated disease diagnosis. Sci Adv. 8: eabo5867.

- Kim Y, Hyon Y, Woo SD, Lee S, Lee SI, et al. (2023). Evolution of the stethoscope: advances with the adoption of machine learning and development of wearable devices. Tuberc Respir Dis (Seoul). 86: 251-263.

- Jiang Z, Song W, Yan Y, Li A, Shen Y, et al. (2024). Automated valvular heart disease detection using heart sound with a deep learning algorithm. Int J Cardiol Heart Vasc. 51: 101368.

- Zhou G, Chien C, Chen J, Luan L, Chen Y, et al. (2024). Identifying pediatric heart murmurs and distinguishing innocent from pathologic using deep learning. Artif Intell Med. 07: 102867.

- Kevat AC, Kalirajah A, Roseby R. (2017). Digital stethoscopes compared to standard auscultation for detecting abnormal paediatric breath sounds. Eur J Pediatr. 176: 989-992.

- McCollum ED, Park DE, Watson NL, Buck WC, Bunthi C, et al. (2017). Listening panel agreement and characteristics of lung sounds digitally recorded from children aged 1-59 months enrolled in the Pneumonia Etiology Research for Child Health (PERCH) case-control study. BMJ Open Respir Res. 4: e000193.

- Grooby E, Sitaula C, Tan K, Zhou L, King A, et al. (2022). Prediction of neonatal respiratory distress in term babies at birth from digital stethoscope recorded chest sounds. Annu Int Conf IEEE Eng Med Biol Soc. 07: 4996-4999.

- Chorba JS, Shapiro AM, Le L, Maidens J, Prince J, et al. (2021). Deep learning algorithm for automated cardiac murmur detection via a digital stethoscope platform. J Am Heart Assoc. 10: e019905.

- Adedinsewo DA, Morales-Lara AC, Afolabi BB, Kushimo OA, Mbakwem AC, et al. (2024). Artificial intelligence guided screening for cardiomyopathies in an obstetric population: a pragmatic randomized clinical trial. Nat Med. 30: 2897-2906.

- Guo L, Pressman GS, Kieu SN, Marrus SB, Mathew G, et al. (2025). Automated detection of reduced ejection fraction using an ECG-enabled digital stethoscope: a large cohort validation. JACC Adv. 4: 101619.

- Glangetas A, Hartley MA, Cantais A, Courvoisier DS, Rivollet D, et al. (2021). Deep learning diagnostic and risk-stratification pattern detection for COVID-19 in digital lung auscultations: clinical protocol for a case-control and prospective cohort study. BMC Pulm Med. 21: 103.

- Siebert JN, Hartley MA, Courvoisier DS, Salamin M, Robotham L, et al. (2023). Deep learning diagnostic and severity-stratification for interstitial lung diseases and chronic obstructive pulmonary disease in digital lung auscultations and ultrasonography: clinical protocol for an observational case-control study. BMC Pulm Med. 23: 191.

- Lakhe A, Sodhi I, Warrier J, Sinha V. (2016). Development of digital stethoscope for telemedicine. J Med Eng Technol. 40: 20-24.

- Yilmaz G, Rapin M, Pessoa D, Rocha BM, de Sousa AM, et al. (2020). A wearable stethoscope for long-term ambulatory respiratory health monitoring. Sensors (Basel). 20: 1-18.

- Razvadauskas H, Vaiciukynas E, Buskus K, Arlauskas L, Nowaczyk S, et al. (2024). Exploring classical machine learning for identification of pathological lung auscultations. Comput Biol Med. 01: 107784.

- Duggan D, Sarana V, Factor A, Shelevytska V, Temko A, et al. (2024). Towards a wearable, high precision, multi-functional stethoscope. Annu Int Conf IEEE Eng Med Biol Soc. 2024: 1-4.

- Kono Y, Miura K, Kasai H, Ito S, Asahina M, et al. (2024). Breath measurement method for synchronized reproduction of biological tones in an augmented reality auscultation training system. Sensors (Basel). 24: 5.

- Das A, Adams K, Stoicoiu S, Kunhiabdullah S, Mathew A. (2024). Amplification of heart sounds using digital stethoscope in simulation-based neonatal resuscitation. Am J Perinatol. 41: e2485-e2488.

© by the Authors & Gavin Publishers. This is an Open Access Journal Article Published Under Attribution-Share Alike CC BY-SA: Creative Commons Attribution-Share Alike 4.0 International License.