Incorporating AI in Hospital Care and Medical Education: Prospects and Perils

by de Silva PN1*, de Silva LM2, Johnston AJ2

1Monkwearmouth Hospital, Newcastle Road, Sunderland SR5 1NB, UK.

2School of Medicine, University of Leeds, Leeds, UK

*Corresponding author: Prasanna N. De Silva, Monkwearmouth Hospital, Newcastle Road, Sunderland SR5 1NB, UK.

Received Date: 25 March, 2026

Accepted Date: 01 April, 2026

Published Date: 03 April, 2026

Citation: de Silva PN, de Silva LM, Johnston AJ (2026) Incorporating AI in Hospital Care and Medical Education: Prospects and Perils. Curr Trends Intern Med 10: 254. DOI: https://doi.org/10.29011/2638-003X.100254

Abstract

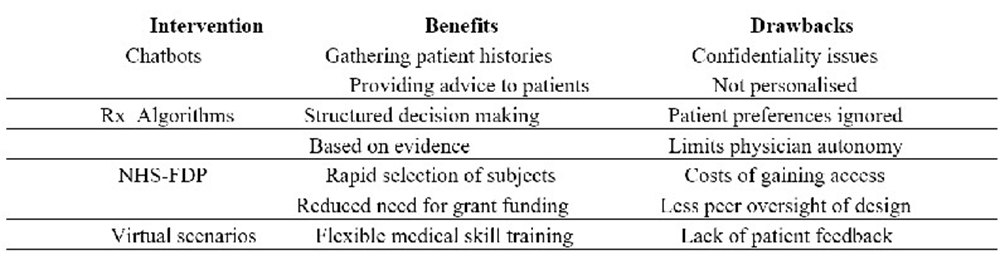

This article touches on the benefits and risks of artificial intelligence (AI) applications being developed for clinical practice and medical training. After outlining the developmental history of AI, examples of pilot projects are described, including ongoing projects in Newcastle and Liverpool that employ history-taking chatbots. The article also investigates AI’s role in medical training and novel research paradigms for case-control studies. Finally, risks and associated ethical issues are highlighted such as misinformation, increased costs and potential job losses during this technological transition.

Keywords: Artificial Intelligence, Large Language Models, Chatbots, Simulation training, Ethics in AI

Introduction – Developmental History of AI

Alan Turing introduced the concept of ‘thinking machines’ [1], establishing the foundation for what would later be termed ‘machine learning’, an interdisciplinary field that integrates physics, statistics, and mathematics. Over the following three decades, advancements in computer technology, including the transition from ‘floppy discs’ to ‘circuit chips’, enabled research groups to develop AI systems with improved architectures, thereby enhancing accessibility and user experience [2].

- Financial developments

Over the last 10 years, corporate investors (especially hedge funds) have financed AI development with the expectation of revenue from subscriber rentals and advertising. Developments have included the collection of extensive databases of words and images. Large Language Models (LLMs) have been ‘trained’ to predict the next word (such as a diagnostic label) following prompts by the user.

However, there is increasing awareness among market analysts that excess investment has been injected into LLM improvements, despite poor returns. There is now a likelihood of investment transfer to more lucrative areas such as defence technologies. This could result in friction in implementing AI solutions in hospital care.

- Artificial General Intelligence

More recently, the AI field has been aiming towards ‘Artificial General Intelligence’ (AGI); the capacity for AI models to replicate human thinking, demonstrating generalisation, reasoning and continuous experiential learning [3]. Researchers had hoped that AGI would occur when maximum data was used to train LLMs. However, despite this data acquisition, progress towards AGI has plateaued, leading some researchers to question the validity of the concept.

AI in European Hospitals

Regarding AI in hospital services, the World Health Organisation has recently charted AI approaches being developed in European healthcare [4]. Of the 60 member states, 32 already use AI-assisted diagnostics (for example, in stroke detection and breast cancer screening). Around 30 states have introduced chatbots for patient engagement. However, challenges caused by legal uncertainty, such as the use of previously copyrighted material for training chatbots, were cited by 43 states, alongside financial limitations.

Examples of AI Chatbots and algorithms

A chatbot is a computer program that responds to written or spoken questions but cannot explain the reasoning behind its answers. Chatbots are trained using large databases containing words and images. This training process employs machine learning, natural language processing, and extensive datasets. AI chatbots, powered by LLMs such as ChatGPT, utilise advanced machine learning techniques to manage a broad range of questions and adapt through user interactions.

- Memory services

A team from Newcastle University is currently evaluating the performance of an in-house chatbot that can obtain collateral histories from carers of people with memory problems (Robertson, Harrison et al. 2025). This bot is sufficiently advanced to identify 4 dementia subtypes: Alzheimer’s, Vascular, Fronto-Temporal and Lewy Body disease. There is no current functionality to recognise mixed dementias or the rarer subtypes. Carers can connect directly to the bot by phone, with responses transcribed via a speech-to-text tool into a secure database. Documenting the collateral history and a provisional diagnosis can assist a clinician in further discussions about the likely condition and potential treatment. There are plans to correlate chatbot diagnostic suggestions with established biomarkers. Direct public access to chatbot conclusions is not expected due to ethical issues (e.g., patient distress and potential exploitation by others).

- Headache clinics

The Walton Centre in Liverpool is developing a chatbot for its headache service [5]. Headache is the presenting problem in around 40% of referrals to Neurology. This bot is designed to engage with patients referred by primary care. Symptom patterns suggestive of a space-occupying lesion, trigeminal autonomic cephalalgia or acute subdural haematoma would be fast-tracked for specialist review. Significant reductions in waiting times (currently 3 months) could be expected. However, there is a risk of pushing the access bottleneck further downstream, to waits for investigations and treatment; an issue facing the memory chatbot project as well.

- Primary Care developments

Meanwhile, Primary Care services in the UK are developing an AI-based triage chatbot called the ‘Patches Care Navigator’ [6], which could be adapted for use in Accident and Emergency (A&E) departments, engaging with the group who might otherwise have accessed primary care. This care navigator is designed to identify ‘red flags’ and divert patients to other services, such as mental health and pharmacy, which could cause frustration for users seeking a ‘one-stop shop’ by attending A&E.

- Mitigating limitations of LLMs

Most LLM chatbots replicate what a specialist clinician would typically diagnose, without necessarily commenting on diagnostic stability over time, prognosis, or the likelihood of treatment benefits. This would require a much larger dataset, such as that provided by the National Health Service Federated Data Platform (NHS-FDP). Palantir, an American firm, has been engaged to oversee the NHS-FDP, providing guidance on outcomes and responding to research questions.

- Back office and admin functions

Some pharmacy departments have adopted treatment algorithms and a ‘robotic arm’ to assist dispensing. AI-based radiology reporting (including early detection of strokes and tumours) is being embedded in some hospitals. Admin tasks such as timetabling, recording clinical activity, appointment management, and data retrieval could be reduced using relevant algorithms. LLM templates could generate discharge letters, ward round notes, and referral requests, which resident doctors could edit thereafter.

Helping junior doctors (see Box 1)

- Know how AI tools work and their limits.

- Accurate and logical prompts when asking AI clinical questions

- Checking AI responses with other sources (publications, guidelines, seniors)

- Integrating AI into clinical workflows and decision-making processes

- Explaining to patients the role of AI in their treatment

- Learn how to train AI diagnostic / treatment chatbots

Box 1: AI competencies for doctors

It is estimated that resident doctors spend 4 hours on administrative work for every 1 hour spent with a patient [7]. Despite electronic records being widely available in primary and mental health care, including functionality to translate speech to text, most ‘acute’ hospitals largely rely on handwritten paper records. In any case, AI-driven transformation is more likely to focus on ‘hospital at home’ schemes linked to early discharge, despite the hopes of resident doctors.

A recent study of draft research papers found that ChatGPT made three times more corrections than a human editor, but with less clarity [8]. AI-based copyediting services are also expensive for researchers based in developing countries.

AI-based medical training and formative assessment

Other AI approaches are being developed, involving 3 models: Reinforcement Learning (RL), Bayesian modelling (BM), and Virtual ‘world’ modelling (VM), which are being evaluated for doctor training. RL enables learning from outcome feedback [9]. BM is best suited to situations in which information emerges gradually, enabling the refinement of hypotheses and the tailoring of responses [10]. VM, like gaming modules, creates a virtual environment (for example, a hospital ward or an A&E department) in which students can explore presentations. Combined RL, VM and BM modules (working as a ‘stack’) are being developed by content creators to allow trainee doctors to learn from experience without harming patients, reduce the need for face-to-face supervision, and provide immediate feedback. This approach is termed Simulation Training (ST), in which a scenario requiring the trainee’s interventions is followed by further scenarios that present choices with feedback based on the trainee’s decisions [11]. Johns Hopkins Medical School is offering an AI-based suturing skills course with live feedback and ratings. Furthermore, formative clinical assessment, such as Objective Structured Clinical Examinations (OSCEs), can be transferred to virtual modelling, providing consistent case study complexity and unbiased ratings.
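The Bayesian element described above can be illustrated with a minimal sketch, in which belief across competing hypotheses is revised each time a new finding emerges in a simulated scenario. This is not taken from any of the training products mentioned; the function names, the placeholder conditions and all probabilities are hypothetical and have no clinical validity.

```python
def bayes_update(prior, likelihood):
    """Return P(hypothesis | finding) from P(hypothesis) and P(finding | hypothesis)."""
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    if total == 0:
        raise ValueError("finding is impossible under every hypothesis")
    return {h: value / total for h, value in unnormalised.items()}


def run_scenario(prior, findings):
    """Apply each finding in turn and keep the belief after every step."""
    belief = dict(prior)
    history = [belief]
    for likelihood in findings:
        belief = bayes_update(belief, likelihood)
        history.append(belief)
    return history


if __name__ == "__main__":
    # Hypothetical starting beliefs for three placeholder conditions.
    prior = {"condition_a": 0.6, "condition_b": 0.3, "condition_c": 0.1}
    # Each entry is an invented P(finding | condition) for one new finding.
    findings = [
        {"condition_a": 0.2, "condition_b": 0.5, "condition_c": 0.9},
        {"condition_a": 0.1, "condition_b": 0.4, "condition_c": 0.8},
    ]
    for step, belief in enumerate(run_scenario(prior, findings)):
        ranked = sorted(belief.items(), key=lambda item: item[1], reverse=True)
        print(step, [(name, round(p, 3)) for name, p in ranked])
```

In a training module, the belief recorded at each step could be compared with the trainee’s own ranking of hypotheses to generate immediate feedback; that use is an assumption for illustration rather than a description of an existing system.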

Paradigm shift on outcome research

As an alternative to time-consuming and expensive randomised controlled outcome studies, it is now possible to use the NHS-FDP to select statistically appropriate numbers of cases and controls with specific characteristics and interventions to detect outcomes rapidly. ‘Before and after’ and ‘crossover’ studies could also be arranged. This AI functionality can reduce the need for the traditional grant allocation process, resulting in cost and time savings. Furthermore, emerging symptom patterns on the NHS-FDP could improve epidemiological research and assist pandemic planning.
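Once cases and controls have been selected, the standard summary of a case-control comparison is the odds ratio with a confidence interval. The sketch below uses the usual log-based (Woolf) interval; it is not tied to any NHS-FDP interface, and the function name and the counts in the example are hypothetical.

```python
import math


def odds_ratio(exposed_cases, unexposed_cases, exposed_controls, unexposed_controls, z=1.96):
    """Return (odds ratio, lower limit, upper limit) for a 2x2 case-control table.

    The interval is exp(ln(OR) +/- z * SE), where
    SE = sqrt(1/a + 1/b + 1/c + 1/d); z = 1.96 gives an approximate 95% interval.
    """
    a, b, c, d = exposed_cases, unexposed_cases, exposed_controls, unexposed_controls
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cells must be positive for this method")
    estimate = (a * d) / (b * c)
    standard_error = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(estimate) - z * standard_error)
    upper = math.exp(math.log(estimate) + z * standard_error)
    return estimate, lower, upper


if __name__ == "__main__":
    # Invented counts: 20 of 100 cases and 10 of 100 controls had the exposure.
    estimate, lower, upper = odds_ratio(20, 80, 10, 90)
    print(f"OR = {estimate:.2f}, 95% CI {lower:.2f} to {upper:.2f}")
```

An interval that includes 1 would indicate that the data are compatible with no association, which is one reason the number of cases and controls selected matters.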

Pitfalls and ethical issues of applying AI in healthcare

- Misuse of LLMs by medical students and resident doctors

There is increasing evidence that medical students and resident doctors are using ChatGPT as their sole source of advice on diagnoses and treatments, rather than review articles, institutional guidelines, or advice from specialist doctors [12]. This is potentially risky, as LLMs prioritise being ‘agreeable’ (described by some authors as ‘sycophantic’) towards users over factual transparency; for example, not providing details on treatment side effects and not describing alternative diagnoses to be excluded. Outright misinformation by LLMs, termed ‘hallucinations’ [13], is not unknown, and creates a litigation risk if application to patient care causes harm. The problem appears to stem from illogical and biased prompts entered by users to elicit answers, suggesting a need for training in writing more specific prompts, alongside cross-checking against other resources to ensure the validity of the information.

- Diagnostic biases in histopathology

On AI algorithmic assistance in histopathological cancer detection, systematic biases against positive diagnosis amongst ethnic minorities and younger individuals have been detected due to these groups not being included in the training process, with urgent remediation (‘tuning’) of the model underway [14].

- Data protection issues

In June 2024, the pathology database of the King’s Hospital Trust in London was breached by a group known as Qilin, which gained access via the partnering company’s IT router, and 900,000 patient records were posted on the dark web. This was accompanied by widespread cancellation of diagnostic clinics and delayed cold surgery. The death of a patient was also linked to this outage. Synnovis, the private provider involved, conducted an 18-month review of the attack but could not identify the access pathway.

- Litigation following deaths attributed to LLMs

Currently, there are 7 claims against ChatGPT, due to alleged ‘AI Psychosis’ [15] and associated suicides after individuals received harmful advice from the LLM. OpenAI, the parent company, has begun making changes by updating ChatGPT to recognise dangerous chats, while denying responsibility for the deaths. It now appears that using poetry as part of prompts can circumvent built-in LLM restrictions on providing harmful advice [16]. Extreme overvalued ideas in theology and politics could readily be reinforced by LLMs amongst the socially isolated (including doctors), potentially resulting in harmful actions towards themselves or others.

- Job security

Realistically, due to the fear of job cuts caused by AI applications in hospitals alongside general inertia toward practice changes, delays in implementation could occur despite earmarked funding, saving jobs in the medium term.

The bigger issue is the ‘dumbing down’ of doctors who use ChatGPT and other LLMs extensively for clinical and academic work. This has been suggested by a recent study at the Massachusetts Institute of Technology (MIT), which used electroencephalography to examine neural activation in specific brain areas, comparing heavy LLM users with non-LLM users [17]. The results suggested both reduced neural activation and psychometric deficits in executive and recall functions among heavy LLM users. These findings replicate those of previous authors [18]. Longer-term follow-up of cognitive skills in the heavy LLM user group is underway.

- Broader ethical considerations

If clinical algorithms are implemented without consultation, there is a risk of exacerbating pre-existing age and race biases in care delivery. This is ‘utilitarianism’, where the preferred choice is the one with the best empirical outcome. This philosophy risks removing priority given to applying the best interests of an individual patient.

Hospital managers focusing entirely on cost-cutting by transferring in-patients to ‘hospital at home’ using AI telemedicine can also lead to ethical issues. Firstly, frail older patients with poor housing, social deprivation and mental health needs (such as dementia) could become stuck in hospital.

Secondly, resident doctors, overburdened with administrative workloads, experience poor job satisfaction and burnout, with consequences for retention. Therefore, ethically sound and diplomatic leadership is required to achieve a balanced allocation of resources among competing groups.

Conclusions (see Box 2)

- The most exciting aspect of AI applications in healthcare is case-control outcome research, with the NHS-FDP used to efficiently select cases and controls for comparing treatment effects. Epidemiological studies to detect symptom patterns also become easier, especially during emerging pandemics.

- Simulation training for medical students and resident medical staff is also promising, as it offers flexible learning whilst protecting patients, saving staff time, and being commercially beneficial for content creators.

- Resident doctor satisfaction rates will improve with AI assistance for administrative duties, such as ward-round notetaking and the retrieval of investigation reports. However, heavy reliance on LLMs in clinical practice could lead to a loss of critical thinking and independent decision-making.

- In response to the inappropriate use of LLMs to seek guidance on diagnoses, treatments, and prognostications, there needs to be training in the safe and effective use of LLMs for all hospital doctors, with universities taking responsibility for training their medical students.

- There needs to be state oversight to encourage (or coerce) parent companies of LLMs to prioritise patient and user safety. Currently, chatbots are not regulated in the UK under the 2023 online safety legislation; this may require secondary legislation to address the deficit.

Key Points

- AI is already being incorporated into hospital care, as described in a recent WHO report.

- Skill-based training for resident doctors via virtual scenario-based modules can be more flexible for personal learning.

- Transformation of case-controlled outcome research is anticipated via interrogation of primary care databases.

- Clinical AI applications are likely to be prioritised for hospital-at-home schemes, partly to cut the costs of hospital bed use.

- Resident doctors who are heavy users of Large Language Models like ChatGPT risk losing critical thinking skills and the ability to innovate.

Author Contributions: PDS wrote the manuscript with content provided by LMD and AJJ. All authors read and approved the final manuscript. All authors have participated sufficiently in the work and agreed to be accountable for all aspects of the work.

Ethics Approval and Consent to Participate: Not applicable.

Declaration of AI-assisted Technologies in the Writing Process: This article was written without AI assistance.

Conflicts of Interest: The authors declare no conflicts of interest.

Funding: Not applicable.

Declaration of Interests: None

References

- Turing AM (1950) Computing machinery and intelligence. Mind. 59: 433-460.

- Roser M (2022) – ‘The brief history of artificial intelligence: the world has changed fast; what might be next?’ Published online at OurWorldinData.org.

- Goertzel B (2014) Artificial General Intelligence: Concept, State of the Art, and Future Prospects. Journal of Artificial General Intelligence, 5:1-48.

- WHO Europe (2025) Artificial intelligence is reshaping health systems: state of readiness across the WHO European Region: WHO Regional Office for Europe: Licence: CC BY-NC-SA 3.0 IGO.

- Davis R (2026) Conversational chatbot helps alleviate strain for Walton Centre – Walton Centre migraine conversational chatbot case study.

- Derere G (2025) Introduction to Patches AI. Patches Health.

- Arab S, Chhatwal K, Hargreaves T, Sorbini M, El-Toukhy S, et al. (2025) Time Allocation in Clinical Training (TACT): national study reveals Resident Doctors spend four hours on admin for every hour with patients, QJM, 118:753-759.

- August E, Gray R, Griffin Z, Klein M, Buser JM, et al. (2026) Does ChatGPT enhance equity for global health publications? Copyediting by ChatGPT compared to Grammarly and a human editor. PLoS One. 21: e0342170.

- Otten M, Jagesar AR, Dam TA, Biesheuvel LA, den Hengst F, et al. (2024) Does Reinforcement Learning Improve Outcomes for Critically Ill Patients? A Systematic Review and Level-of-Readiness Assessment. Crit Care Med. 52: e79-e88.

- McLachlan S, Dube K, Hitman GA, Fenton NE, Kyrimi E (2020) Bayesian networks in healthcare: Distribution by medical condition, Artif Intell Med. 107:101912.

- Hamilton A (2024) Artificial Intelligence and Healthcare Simulation: The Shifting Landscape of Medical Education. Cureus, 16: e59747.

- Abdelhafiz AS, Farghly MI, Sultan EA, Abouelmagd ME, Ashmawy Y, et al. (2025) Medical students and ChatGPT: analysing attitudes, practices and academic perceptions. BMC Med Educ. 25: 187.

- Farquhar S, Kossen J, Kuhn L, Gal Y (2024) Detecting hallucinations in large language models using semantic entropy. Nature. 630: 625-630.

- Lin SY, Tsai PC, Su SY, Chen CY, Li E, et al. (2025) Contrastive learning enhances fairness in pathology artificial intelligence systems. Cell Rep Med. 6:102527.

- Preda A (2025) AI-induced psychosis: a new frontier in mental health. Psychiatric News: 60: 10.

- Bisconti P, Prandi M, Pierucci F, Giarrusso F, Syrnikov MB, et al. (2025) Adversarial poetry as a universal single-turn jailbreak mechanism in Large Language Models.

- Kosmyna N, Hauptmann E, Yuan YT, Situ J, Liao XH, et al. (2025) Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing tasks. arXiv preprint.

- Stadler M, Bannert M, Sailer M (2024) Cognitive ease at a cost: LLMs reduce mental effort but compromise depth in student scientific inquiry. Computers in Human Behavior. 160: 108386.

© by the Authors & Gavin Publishers. This is an Open Access Journal Article Published Under Attribution-Share Alike CC BY-SA: Creative Commons Attribution-Share Alike 4.0 International License.